Concerns about user privacy have accompanied the rise of AI in recent years. As AI takes on critical roles in analytics and decision-making across sectors like finance and healthcare, a lack of confidence in how these systems handle data can quickly undermine their credibility. The challenge is clear: how can we rely on AI systems without exposing sensitive data or proprietary models?

Verifiable AI has emerged as a promising approach to closing this trust gap while safeguarding data privacy. Companies like ARPA Network are exploring it using privacy-preserving Zero-Knowledge Proofs (ZKPs), which enable independent verification of AI outputs without exposing sensitive data.

AI Has a Privacy Problem

Modern AI models have transformed applications in natural language processing, image recognition, and recommendation systems. However, as these models become increasingly sophisticated, a profound challenge arises: how can their accuracy or correctness be verified without exposing proprietary model parameters or leaking sensitive data?

Organizations often invest significant resources in training proprietary models, making them valuable intellectual property. At the same time, users increasingly demand transparency and assurance that these systems perform as claimed. These goals often conflict.

Disclosing internal model details risks exposing trade secrets or sensitive training data. Yet withholding them makes it difficult for users to evaluate whether a model is reliable or accurate.

Complaints about a lack of user privacy are not new. In ‘The Age of Surveillance Capitalism’, Shoshana Zuboff describes how companies claim “human experience as free raw material for translation into behavioral data.” In the AI era, this dynamic has only intensified.

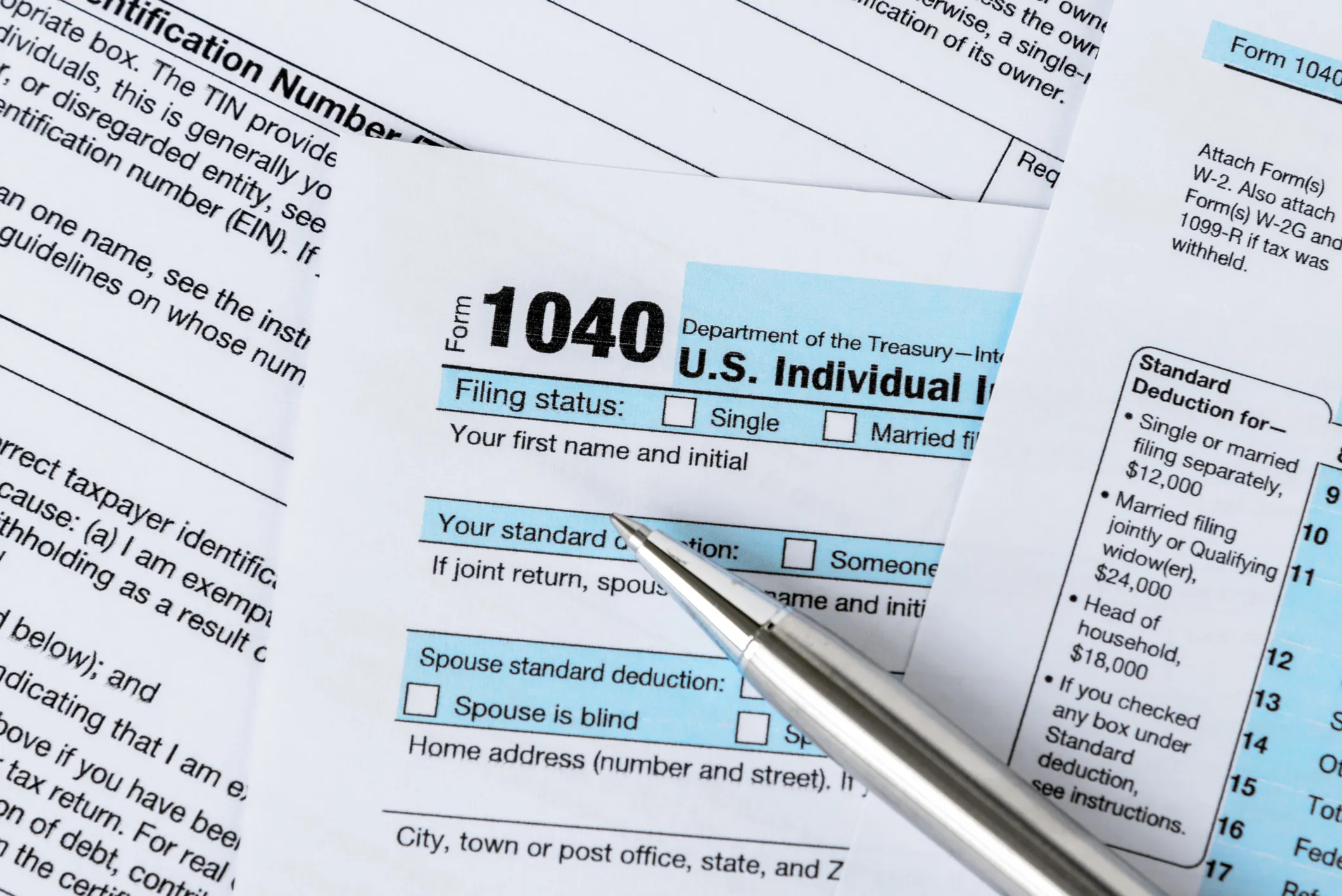

Researchers have shown that AI models can, under certain conditions, memorize and reproduce fragments of their training data, raising concerns about unintended data leakage. At the same time, the use of publicly available or scraped data to train models continues to fuel debates around consent and data ownership. Reported examples include patients finding their private records in AI output and user data being used to train models without explicit consent.

Academic research has also highlighted broader risks, including sensitive attribute inference, identity exposure, and other forms of privacy harm that can affect individuals economically, socially, and psychologically. A Stanford University paper describes how AI exacerbates privacy concerns by creating new risks, including identity theft, incorrect inferences, and the exposure of sensitive information. A separate study by Danielle Citron and Daniel Solove catalogs ‘privacy harms’ that span the physical, economic, reputational, emotional, and relational dimensions of a person’s life.

This creates a trust dilemma. AI systems are becoming indispensable, but users need evidence that they work as intended without compromising their privacy. So how can we verify AI outputs without revealing how the model works or exposing the data behind it?

Verifiable AI Plugs AI’s Privacy Gaps

Verifiable AI refers to systems that generate cryptographic proofs allowing independent parties to confirm that an AI model’s output was produced correctly. Rather than asking users to blindly trust a system, these approaches provide a way to verify its behavior.

A key enabling technology is the Zero-Knowledge Proof (ZKP). A ZKP allows one party to prove that a computation was performed correctly without revealing any additional information about the inputs or the model itself. In the context of AI, this means a model can produce an output along with a proof that the computation followed a defined process, without exposing sensitive data or proprietary logic.
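To make the idea of proving something without revealing it more concrete, here is a minimal sketch of a classic Schnorr proof of knowledge, made non-interactive with a hash-based challenge. It is not ZKML and is not drawn from any project named in this article: the prover simply shows that it knows a secret number behind a public key. The group parameters are toy values assumed purely for illustration and are far too small to be secure, and the function names are hypothetical.

```python
import hashlib
import secrets

# Toy group parameters (assumed for illustration, far too small to be secure):
# P is a safe prime, Q = (P - 1) // 2 is prime, and G generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4


def challenge(public_key: int, commitment: int) -> int:
    """Derive the challenge by hashing the public values into a number modulo Q."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int) -> tuple[int, int, int]:
    """Return (public_key, commitment, response); the secret itself is never output."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q - 1) + 1  # fresh random value for every proof
    commitment = pow(G, nonce, P)
    c = challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return public_key, commitment, response


def verify(public_key: int, commitment: int, response: int) -> bool:
    """Accept if G^response == commitment * public_key^c (mod P)."""
    c = challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


secret = secrets.randbelow(Q - 1) + 1
public_key, commitment, response = prove(secret)
print(verify(public_key, commitment, response))            # True: honest proof
print(verify(public_key, commitment, (response + 1) % Q))  # False: tampered proof
```

The verifier only ever sees the public key, the commitment, and the response. In a verifiable AI setting the statement being proven would be far richer (roughly, "this output came from running this model on this input"), which is why general-purpose proof systems are needed rather than a single-purpose protocol like this one.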

Projects such as ARPA Network are actively exploring how Verifiable AI can be applied in areas like identity management, data analytics, and Web3 applications. By leveraging techniques such as Zero-Knowledge Machine Learning (ZKML), these efforts aim to enable verifiable AI computation in decentralized environments while preserving privacy.

In blockchain-based systems, ZK-powered mechanisms can also help ensure that data inputs and outputs are trustworthy without revealing the underlying information. Techniques such as ZK-SNARKs allow verifiers to confirm that a computation was executed correctly and has not been tampered with while preserving both data privacy and intellectual property.
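The "has not been tampered with" part can be illustrated with a simple salted hash commitment. This is only a sketch under stated assumptions: a commitment on its own is not a zero-knowledge proof, and the idea that a proof would be checked against such a published commitment is an assumption about how a deployment might be structured, not a description of any specific project. The weights, the function names, and the JSON serialization are all hypothetical.

```python
import hashlib
import json
import secrets


def commit_to_weights(weights: dict, salt: bytes) -> str:
    """Return a SHA-256 commitment to the weights; the random salt keeps them hidden."""
    payload = json.dumps(weights, sort_keys=True).encode()
    return hashlib.sha256(salt + payload).hexdigest()


def opens_to(commitment: str, weights: dict, salt: bytes) -> bool:
    """Check whether the given weights and salt match a published commitment."""
    return secrets.compare_digest(commitment, commit_to_weights(weights, salt))


# Hypothetical model parameters, kept private by the model owner.
weights = {"layer1": [0.12, -0.5], "bias": [0.01]}
salt = secrets.token_bytes(32)

# Only this digest would be published, for example on a blockchain.
published = commit_to_weights(weights, salt)

swapped = {"layer1": [0.12, -0.4], "bias": [0.01]}
print(opens_to(published, weights, salt))  # True: same model as committed
print(opens_to(published, swapped, salt))  # False: the model was changed
```

Publishing the digest pins the provider to one fixed model without revealing its parameters. A ZK-SNARK would then carry the harder claim, namely that a given output was computed with weights matching that digest, without the weights or the salt ever being disclosed.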

For example, healthcare organizations could use sensitive patient data to train AI models while providing verifiable assurances that outputs are computed correctly without exposing identifiable information. Similarly, financial institutions could deploy AI systems that make decisions based on protected credit data, while still allowing those decisions to be independently verified.

While Verifiable AI offers a compelling path forward, it is still an emerging field. Generating cryptographic proofs for complex AI models can be computationally intensive, and many ZKML frameworks are still in early stages of development. As a result, large-scale, real-world deployment remains limited for now.

However, progress in both AI and cryptography is rapid. As these technologies mature, the cost and complexity of verifiable systems are expected to decrease, making them more practical for broader adoption.

Looking Ahead

With AI projected to contribute trillions of dollars to the global economy by 2030, building reliable, verifiable systems that users, organizations, and regulators can trust is essential. Verifiable AI represents an important step in that direction and offers a way to ensure transparency and accountability without sacrificing privacy.

By enabling systems that can be independently verified yet remain secure, Verifiable AI has the potential to reshape how trust is established in the age of intelligent machines.
